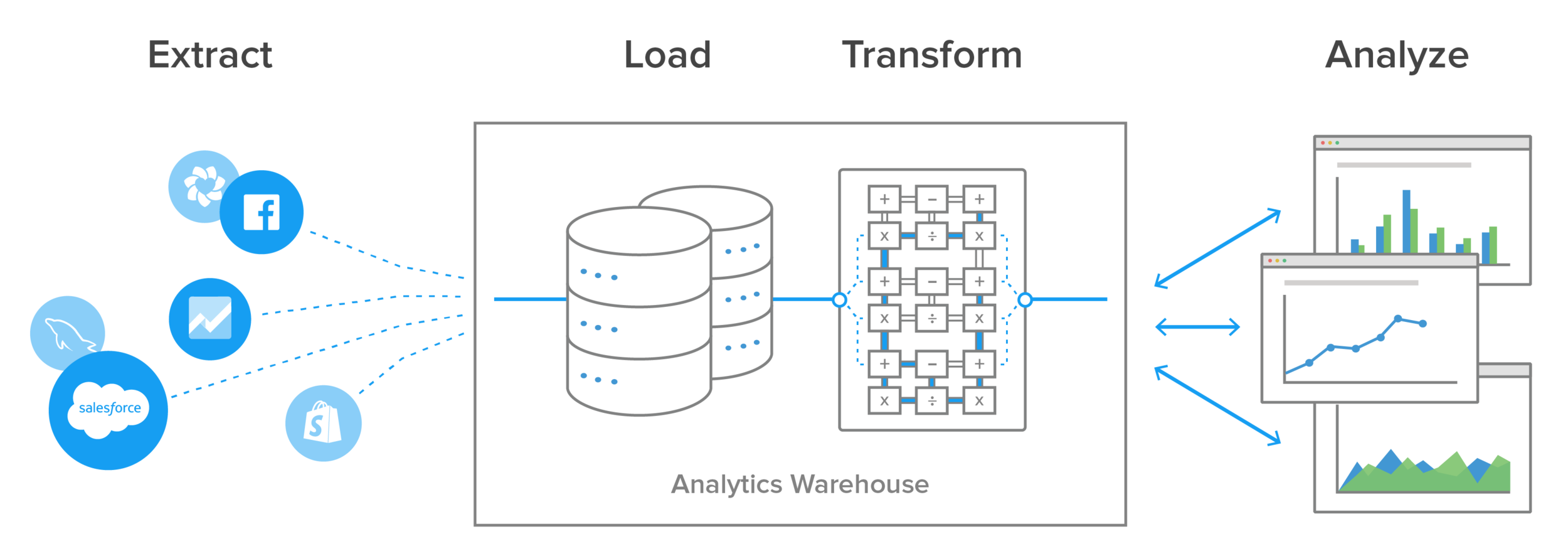

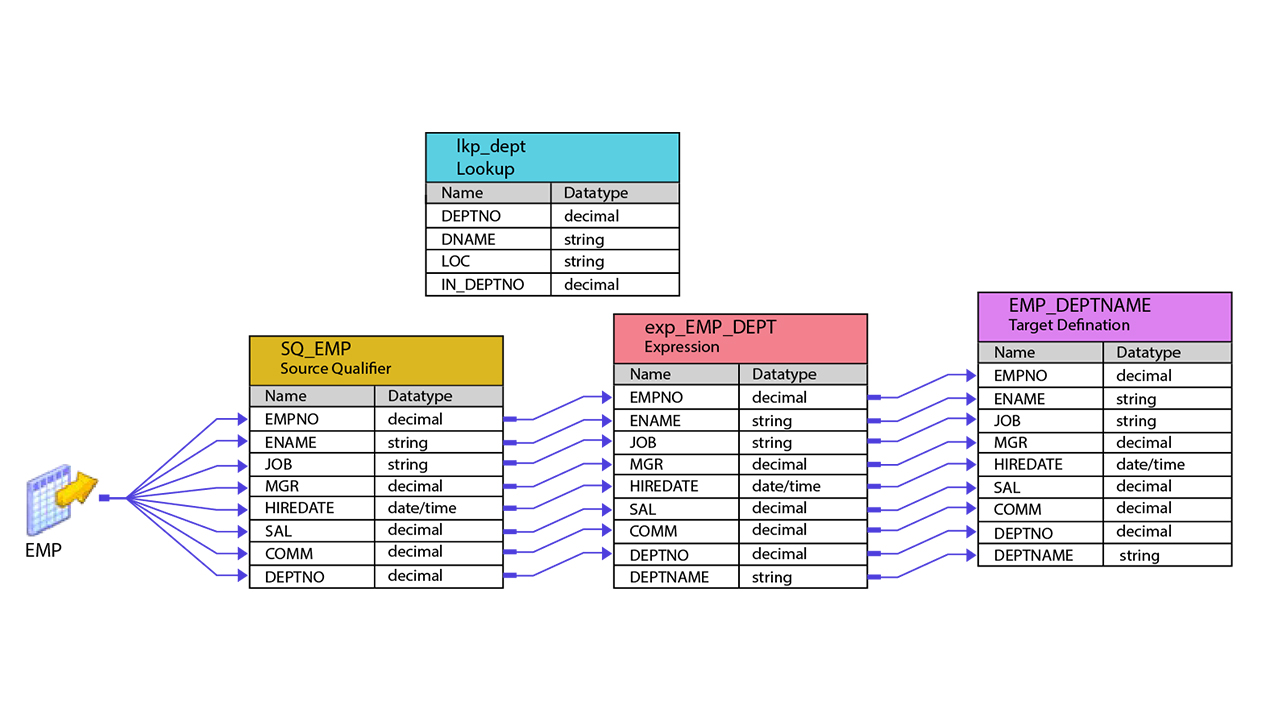

The three steps (extract, transform, and load) are critical for ensuring that data is consistent, accurate, and up-to-date. The extract phase involves retrieving data from different sources, such as databases, APIs, or files. The transform phase involves cleaning, filtering, and enriching the data to make it suitable for analysis. Finally, the load phase involves transferring the transformed data to a data warehouse or database where it can be analyzed.

## The Evolution of ETL Pipelines: From Custom Solutions to Modern Tools

ETL pipelines have been an important part of data integration for many years. In the beginning, they were custom-built solutions.

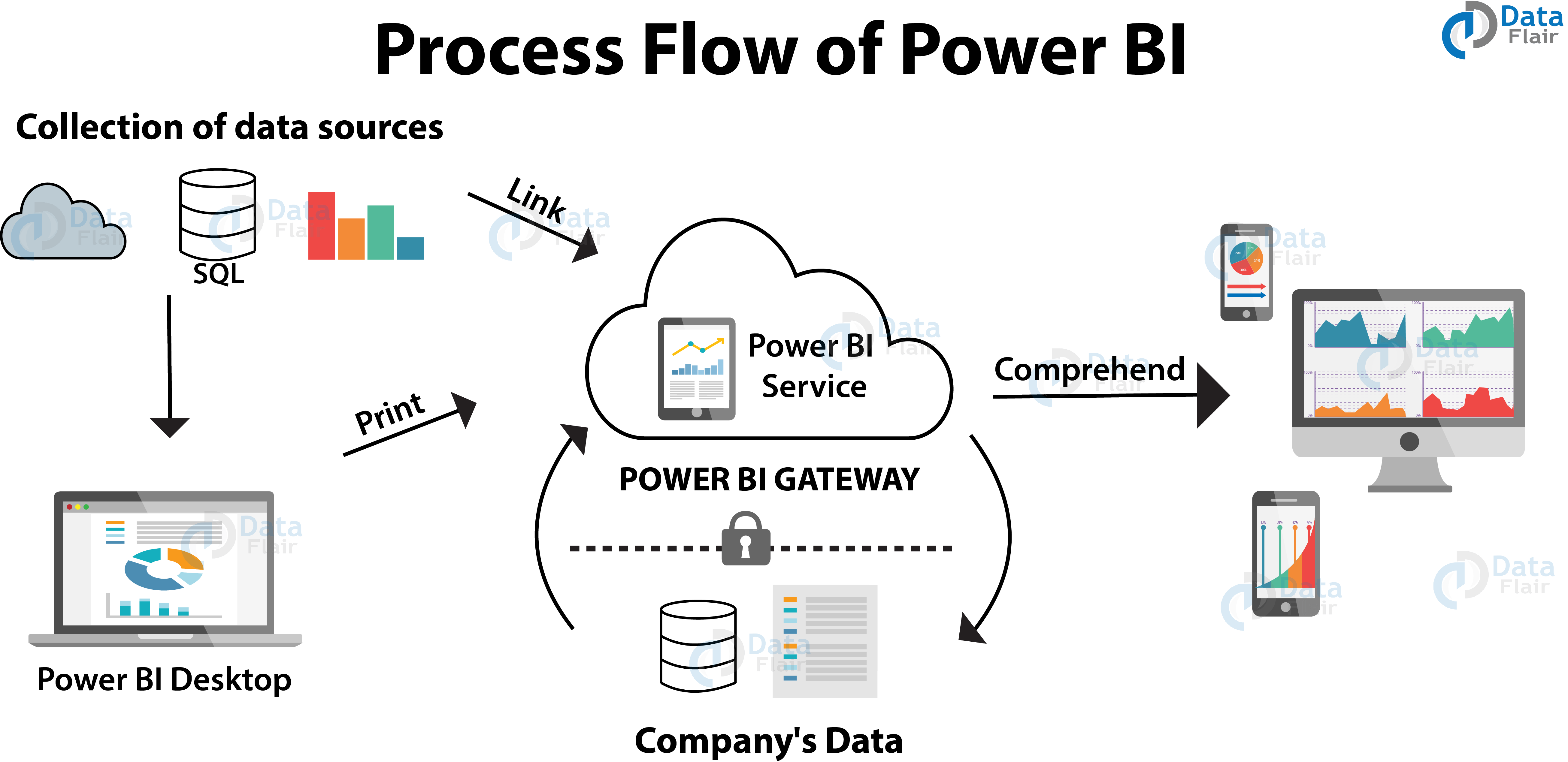

In this article, we're looking to showcase the perks of adopting the modern data stack and how it can revolutionize your organization's data integration processes. We'll dive into the capabilities of modern ETL tools, touching on subjects like flexibility, scalability, and cost-effectiveness. Additionally, we'll talk about the influence of industry trends and emerging technologies on the future of ETL processes, showing how the modern data stack is well-prepared to adapt to the rapidly shifting data landscape.

As you go through this analysis, we hope to demonstrate the simplicity and agility that the modern data stack offers, encouraging you to think about the benefits of utilizing modern ETL tools for your organization's data integration needs. Whether you're a seasoned data professional or just starting your journey into the world of analytics, our aim is to highlight the transformative potential of the modern data stack, empowering you to make well-informed decisions for your organization's data management strategy.

## Understanding ETL Pipelines

Before we dive into the comparison between custom ETL pipelines and modern ETL tools, let's first understand what ETL pipelines are and why they're integral to many analytics processes.

ETL pipelines are the backbone of data-driven organizations. They allow businesses to extract valuable insights from their data and make informed decisions. ETL stands for Extract, Transform, and Load, which are the three essential steps in the ETL process.

## What Are ETL Pipelines?

An ETL pipeline is a series of processes that extract data from different sources, transform the data into a usable format, and finally load the data into a destination database or data warehouse for analysis.
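As a rough illustration of the extract, transform, and load steps, here is a minimal sketch of a hand-written pipeline in Python. It is only one possible shape under stated assumptions: the sample CSV data, the `orders` table, and the function names are all hypothetical, and an in-memory SQLite database stands in for a real data warehouse.

```python
import csv
import io
import sqlite3

# Hypothetical raw export; in practice the source could be a database, an API, or a file.
RAW_CSV = """order_id,customer,amount
1, alice ,19.99
2,bob,
3,carol,5.00
"""


def extract(source):
    """Extract: read raw rows from a CSV source into dictionaries."""
    return list(csv.DictReader(io.StringIO(source)))


def transform(rows):
    """Transform: drop rows without an amount, tidy names, and cast types."""
    cleaned = []
    for row in rows:
        amount = row["amount"].strip()
        if not amount:
            continue  # filter out incomplete records
        cleaned.append(
            (int(row["order_id"]), row["customer"].strip().title(), float(amount))
        )
    return cleaned


def load(records, conn):
    """Load: write the cleaned records into the destination table."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS orders "
        "(order_id INTEGER PRIMARY KEY, customer TEXT, amount REAL)"
    )
    conn.executemany("INSERT INTO orders VALUES (?, ?, ?)", records)
    conn.commit()


def run_pipeline(source, conn):
    """Run the three steps in order and return the number of rows loaded."""
    records = transform(extract(source))
    load(records, conn)
    return len(records)


if __name__ == "__main__":
    connection = sqlite3.connect(":memory:")  # stand-in for a warehouse
    loaded = run_pipeline(RAW_CSV, connection)
    print(f"Loaded {loaded} rows")
```

Keeping each step in its own function makes it easy to swap the source or destination later, which is also roughly the part that ready-made ETL tools take over with pre-built connectors.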

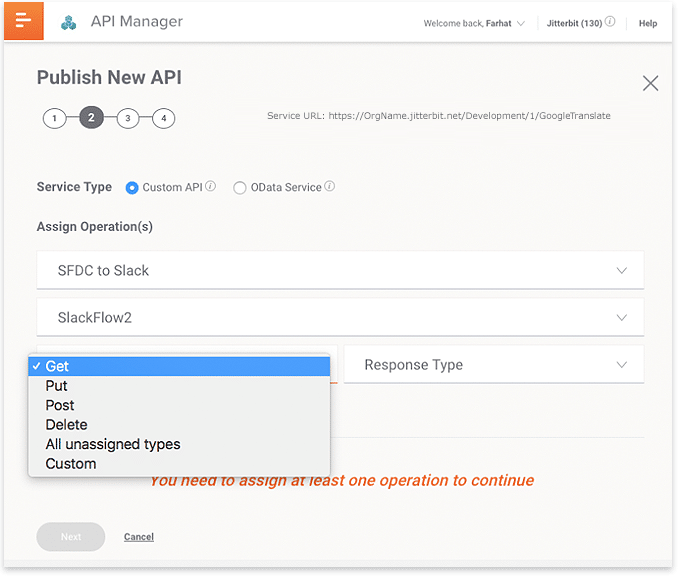

# Is Building Custom ETL Pipelines Outdated?

In the fast-paced world of data analytics, ETL (extract, transform, load) pipelines have been crucial for integrating and processing data from a variety of sources. Custom ETL pipelines used to be the go-to solution, but with the emergence of modern ETL tools, there's been a shift in the conversation: are custom ETL pipelines becoming obsolete? Nowadays, the modern data stack offers increased simplicity and adaptability, giving organizations a fresh take on data integration.

ETL has always played a significant role in data management, as organizations have depended on these processes to merge data from multiple sources, clean it up, and prep it for analysis. ETL pipelines were traditionally built as custom solutions, designed to fit each organization's specific needs. While these tailored solutions allowed companies to maintain a high level of control over their data processing, they often came with added complexity and upkeep challenges.

As the data landscape has evolved and become more intricate, we've seen a steady rise in the number of ready-made ETL tools aimed at streamlining and automating data integration tasks. These modern ETL tools are packed with pre-built connectors and transformation functions, making it simpler than ever to set up and manage data pipelines. This new generation of tools, part of the modern data stack, has transformed how organizations tackle data integration, with a focus on user-friendliness, adaptability, and scalability.
